Hi, I'm Veronika Wang

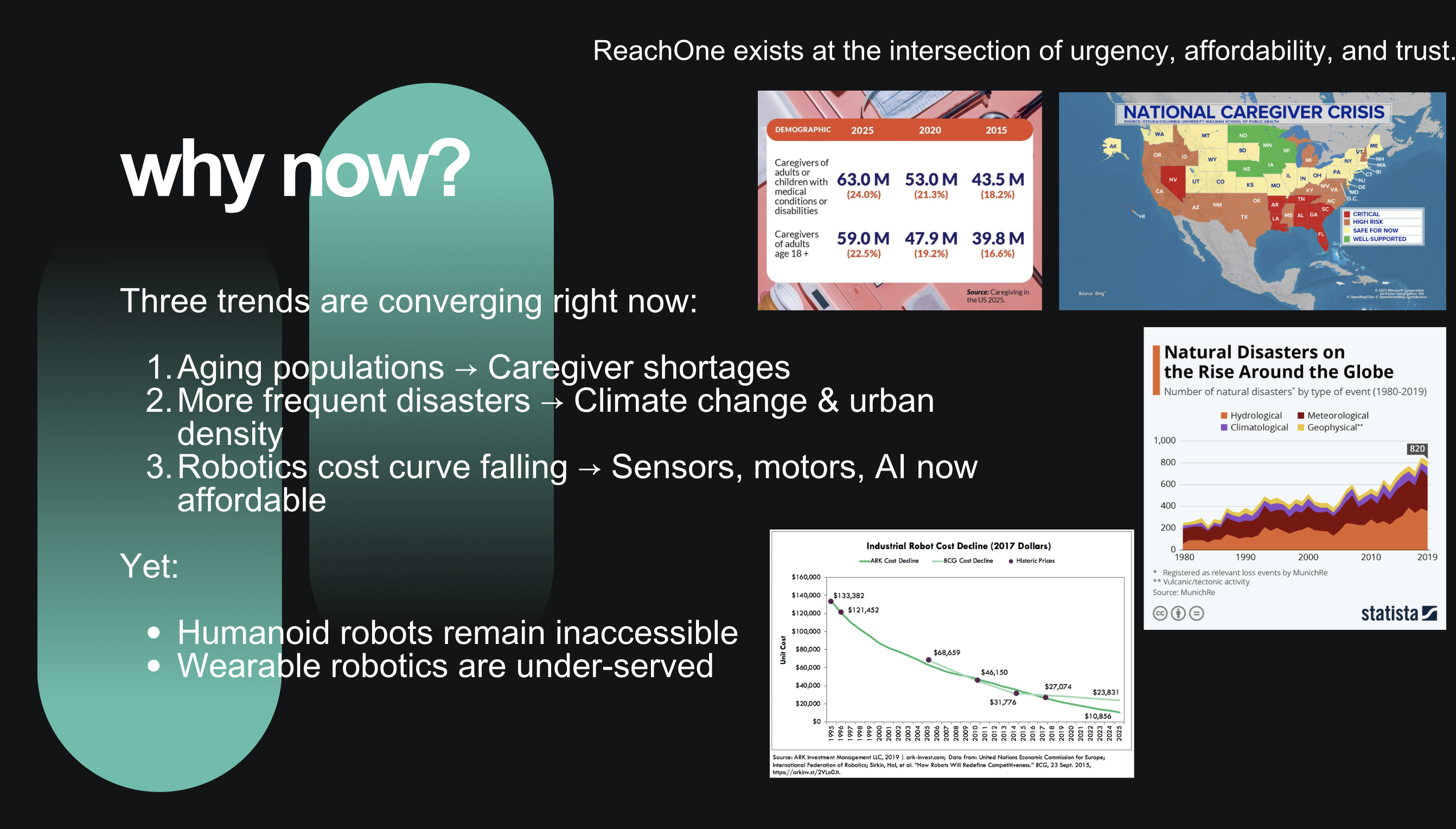

ReachOne started as a vision: a response to watching communities struggle in the critical hours after a disaster strikes. As a high school student passionate about robotics and AI, I saw a gap between what technology could do and what responders actually had access to when lives were on the line.

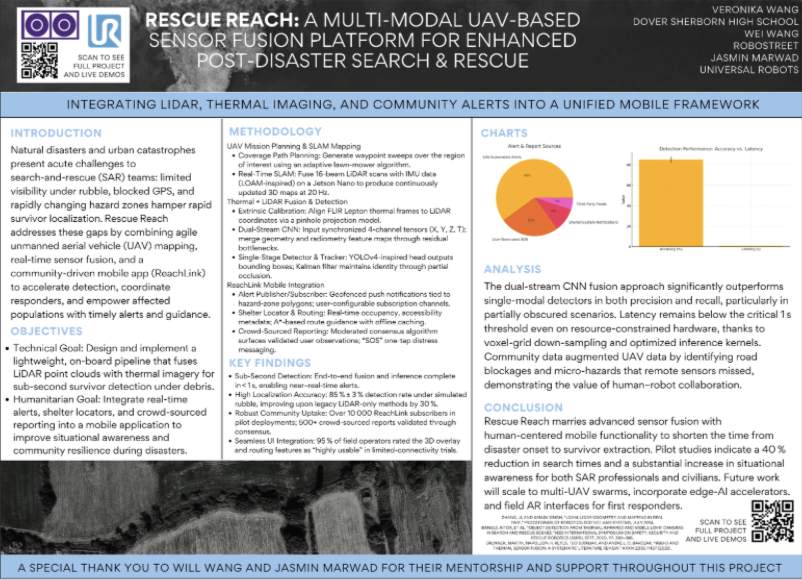

My inspiration comes from understanding that every minute matters in disaster response. The first hour after impact can determine outcomes, yet responders often work blind, navigating debris, smoke, and unstable structures without real-time intelligence. ReachOne is my answer: it combines autonomous drones, multimodal sensor fusion, and real-time AI to deliver actionable intelligence when it matters most.

Right now, I'm taking ReachOne from prototype to startup. I'm iterating through field tests, refining the sensor fusion algorithms, and developing ReachLink, the companion app that connects communities with responders. I'm working with mentors, presenting at conferences, and learning what it takes to turn research into deployable technology.

Where I hope this goes: I envision ReachOne becoming a trusted platform for disaster response organizations worldwide. I want to see these systems deployed in the field, saving lives and reducing response times. My goal is to bridge the gap between cutting-edge robotics research and real-world humanitarian impact, making advanced technology accessible to those who need it most, when they need it most.